Omacast

An AI-powered podcast platform that turns your curiosity into a personalized series.

My Role

As a design engineer, I researched, designed, and built the platform end to end.

The Pitch

- Audience

- Lifelong learners and autodidacts who spend commutes and evenings on podcasts.

- Problem

- When a question sparks mid-commute, no podcast meets the moment. There’s no “French Revolution, beginner level, interview format.”

- Promise

- Bring your topic and set your format and depth. We turn it into a real conversation.

- Channels

- 15 beta users for early feedback; shared in history and economics Reddit communities and advertised on Instagram Stories.

The Product

A podcast-style app that generates bespoke episodes on demand. Users bring a subject, set an angle and complexity level, and get an audio conversation with full transcript and notes.

Features:

- A text field for custom subjects, plus a topic menu by category.

- A dynamic questionnaire that helps tailor series, format, and depth.

- A player that syncs script to audio and surfaces key terms in context.

The Build

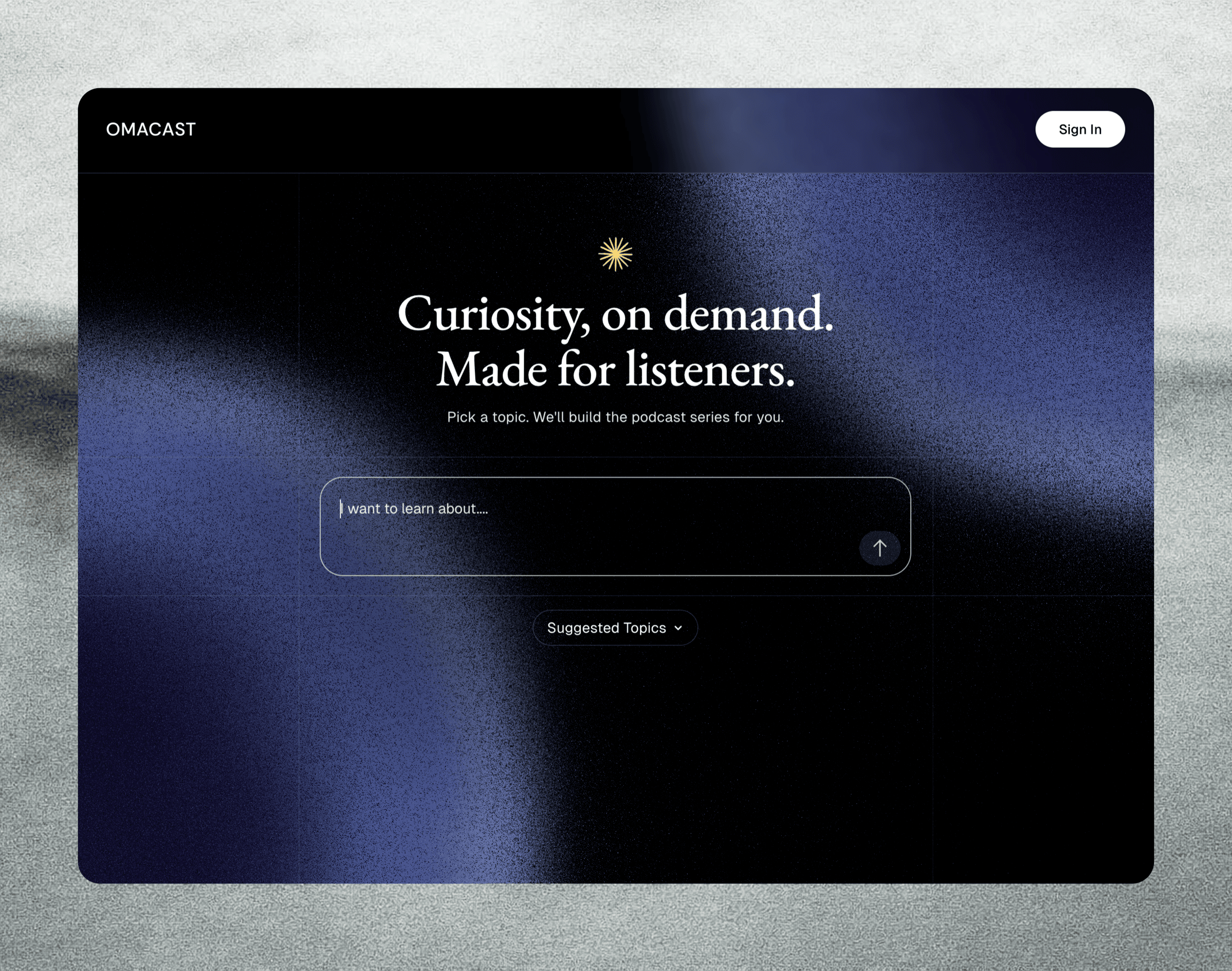

1. Homepage

The Experience

The homepage is one input. Users type a topic or pick a starter prompt grouped by discipline. The only decision is what to be curious about.

In the Background

A guest who lands on the homepage is silently signed in via Supabase’s anonymous auth, so a real user record exists from the start and the episode is tied to it. No migration step is needed when they create an account later.
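A minimal sketch of that silent sign-in, written against a small interface rather than the real client so it stays self-contained. The `AuthLike` and `Session` types and the `ensureGuestSession` helper are assumptions for illustration; they mirror the `auth.getSession` and `auth.signInAnonymously` calls in supabase-js, not the app's actual code.

```typescript
// Minimal shape of the auth calls used here. It mirrors a subset of
// supabase-js (auth.getSession, auth.signInAnonymously); the interface
// itself is hypothetical and exists only to keep the sketch self-contained.
interface Session {
  user: { id: string; is_anonymous?: boolean };
}

interface AuthResult {
  data: { session: Session | null };
  error: Error | null;
}

interface AuthLike {
  getSession(): Promise<AuthResult>;
  signInAnonymously(): Promise<AuthResult>;
}

// Returns the existing session if there is one; otherwise creates an
// anonymous session so the guest has a user id that episodes can reference.
async function ensureGuestSession(auth: AuthLike): Promise<Session> {
  const existing = await auth.getSession();
  if (existing.error) throw existing.error;
  if (existing.data.session) return existing.data.session;

  const created = await auth.signInAnonymously();
  if (created.error) throw created.error;
  if (!created.data.session) {
    throw new Error("Anonymous sign-in returned no session");
  }
  return created.data.session;
}
```

In the app this would presumably be called once on page load with the Supabase client's `auth` object. Because the guest already has a user id, a later sign-up can attach credentials to the same record.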

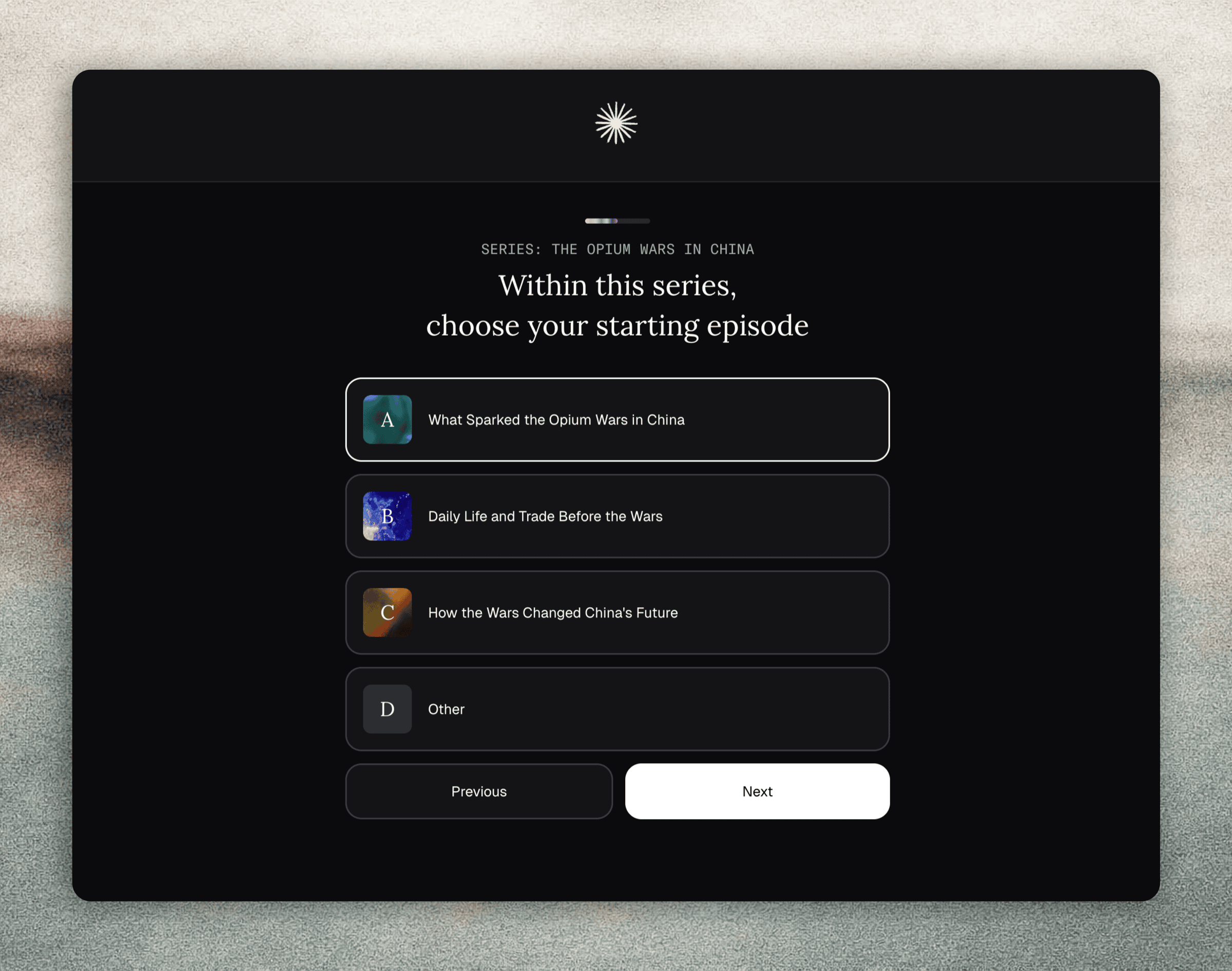

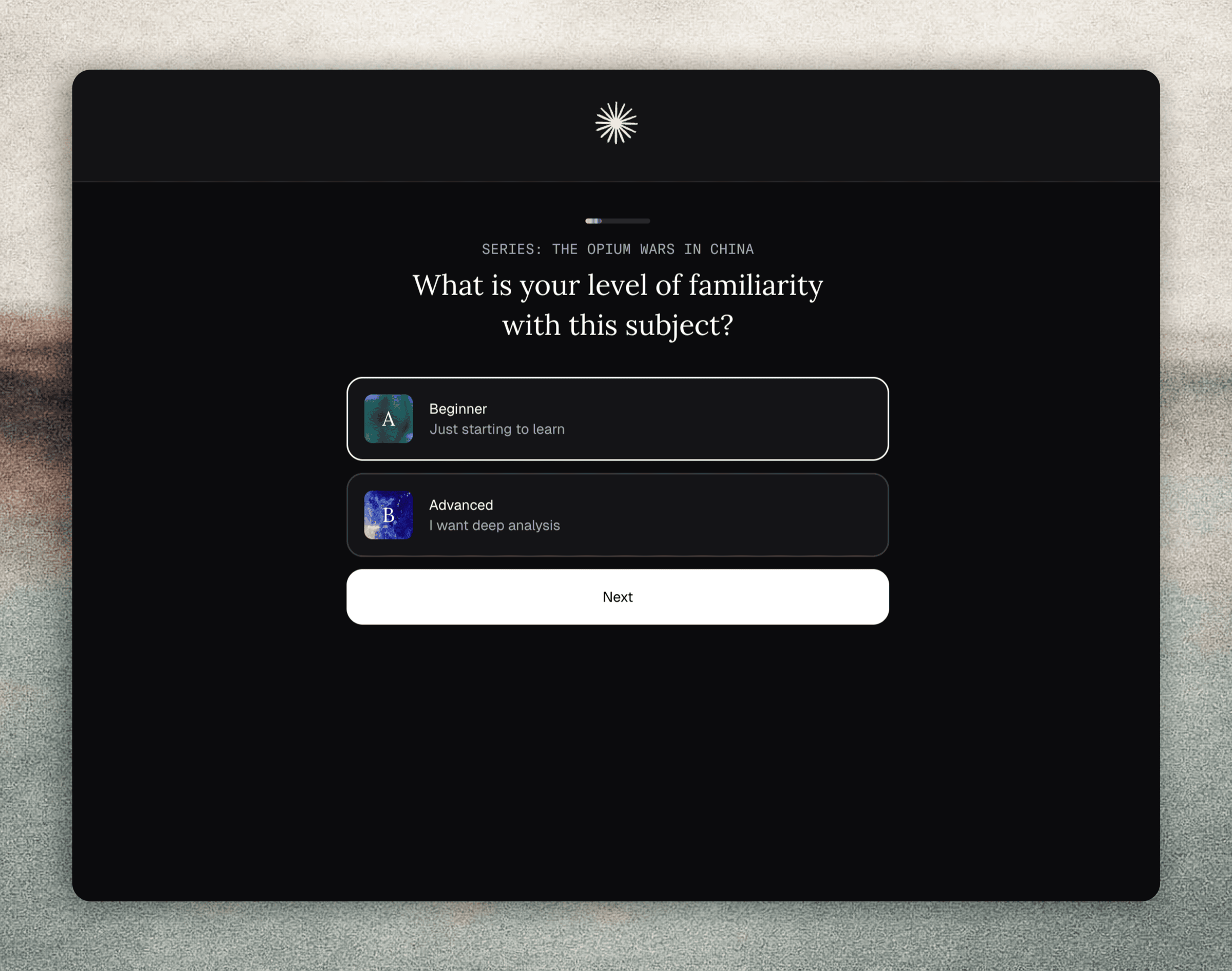

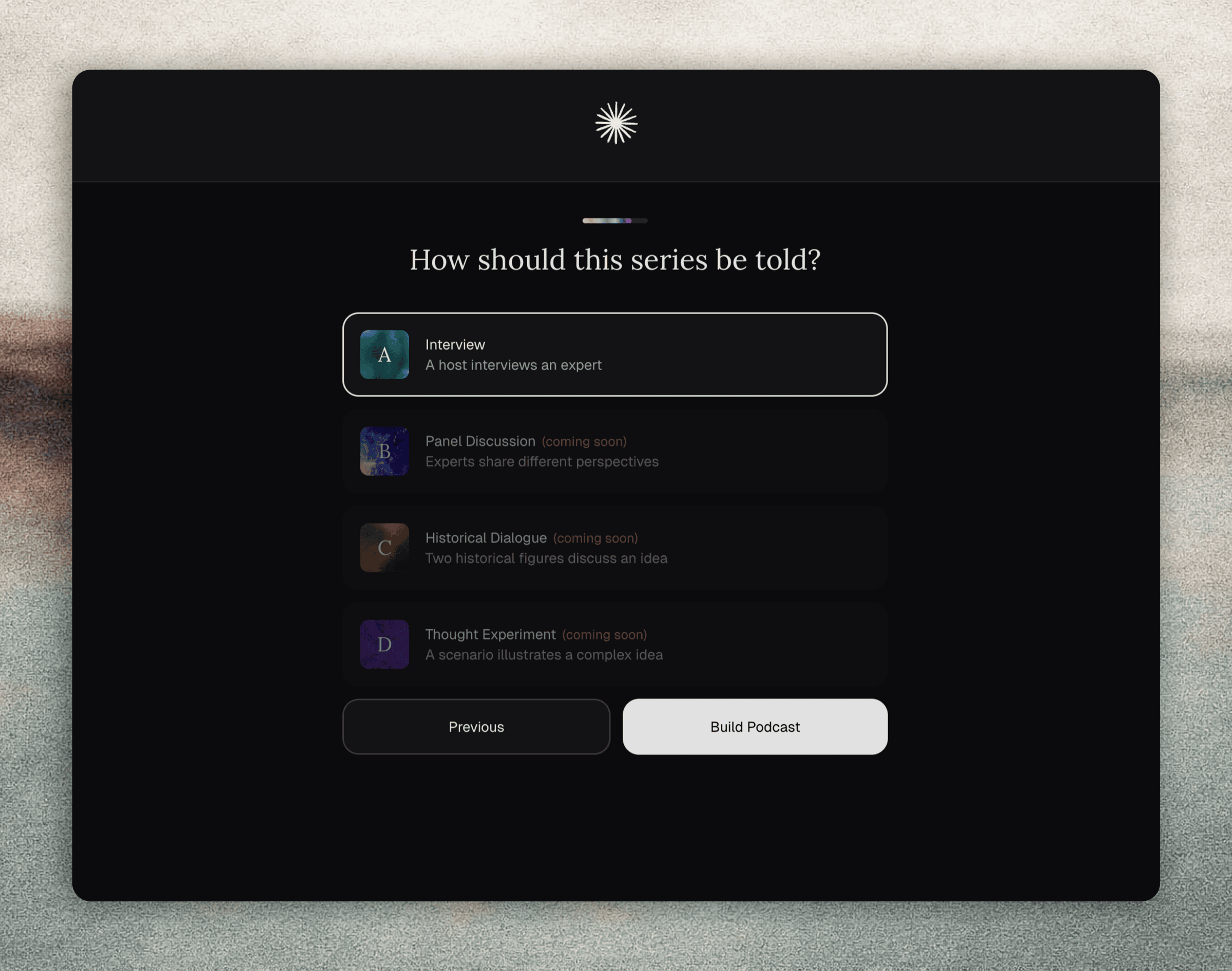

2. Questionnaire

The Experience

The user is walked through a few steps that shape the episode: scope, depth, title, and format. The dynamic flow adapts to the input, fast-tracking when the topic is clear, and offering refinements or regenerating options when it’s not.

In the Background

GPT-5 is called up to twice via the API: once to read the topic and either run with it or offer three sharper angles, and again only if the user rejects the titles and asks for fresh ones.
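One way to picture the first call's result is as a two-branch value the UI can switch on. The sketch below assumes the model is asked to reply with JSON; the `TopicReading` type, its field names, and the step names are hypothetical, not the app's real schema.

```typescript
// Hypothetical result of the first model call: either the topic is clear
// enough to proceed, or the model proposes three narrower angles.
type TopicReading =
  | { kind: "proceed"; topic: string }
  | { kind: "refine"; angles: string[] };

// Parses the raw JSON text from the model and checks its shape by hand,
// so a malformed reply fails loudly instead of reaching the UI.
function parseTopicReading(raw: string): TopicReading {
  const value: unknown = JSON.parse(raw);
  if (typeof value !== "object" || value === null) {
    throw new Error("Expected a JSON object");
  }
  const { kind, topic, angles } = value as Record<string, unknown>;

  if (kind === "proceed" && typeof topic === "string") {
    return { kind: "proceed", topic };
  }
  if (
    kind === "refine" &&
    Array.isArray(angles) &&
    angles.length === 3 &&
    angles.every((a) => typeof a === "string")
  ) {
    return { kind: "refine", angles: angles as string[] };
  }
  throw new Error("Unexpected topic reading shape");
}

// Picks the next questionnaire step: fast-track a clear topic,
// otherwise show the angle picker first. Step names are illustrative.
function nextStep(reading: TopicReading): "depth" | "pick-angle" {
  return reading.kind === "proceed" ? "depth" : "pick-angle";
}
```

Keeping the branch explicit in the type means the fast-track path and the refinement path cannot be confused downstream.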

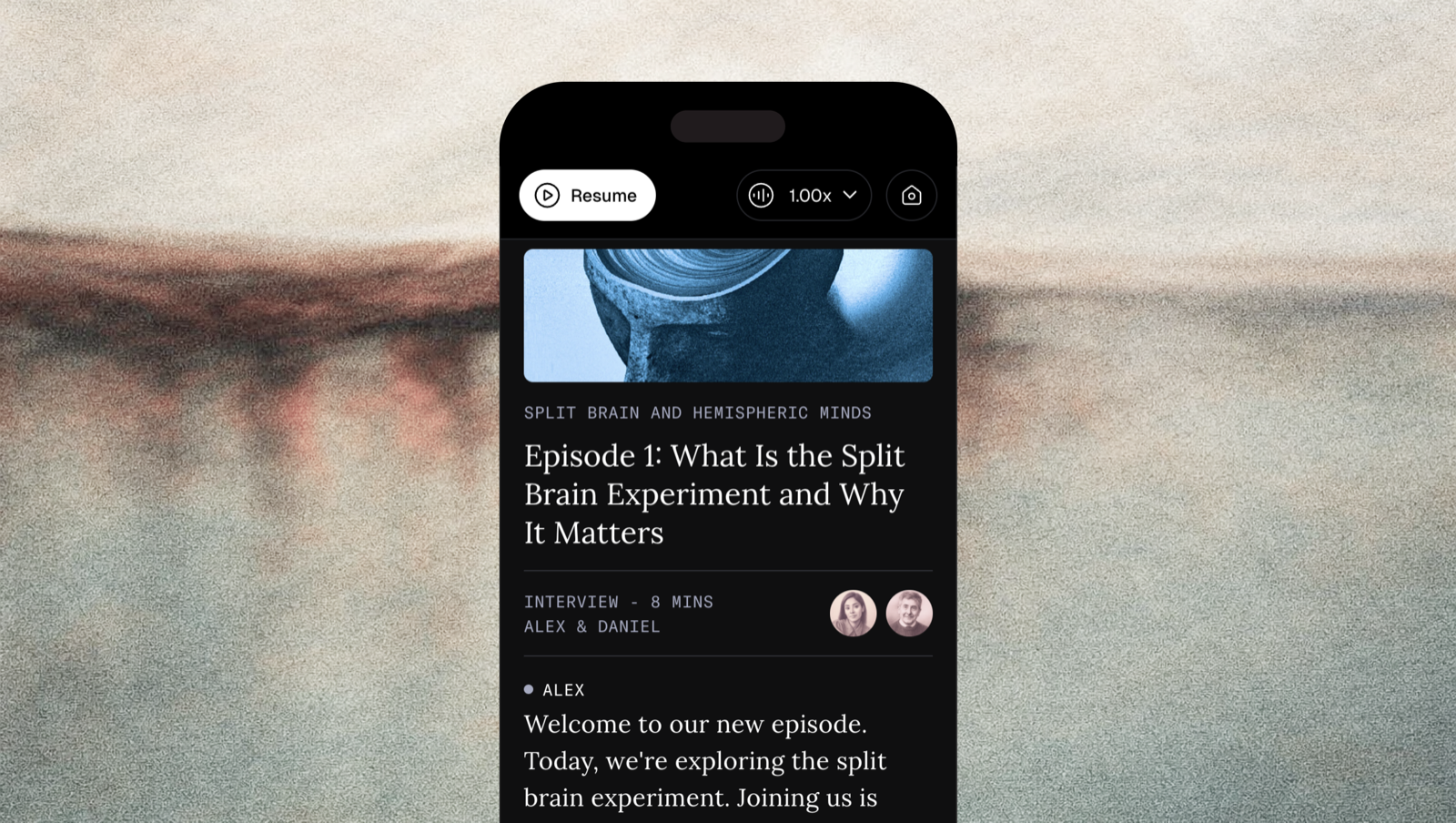

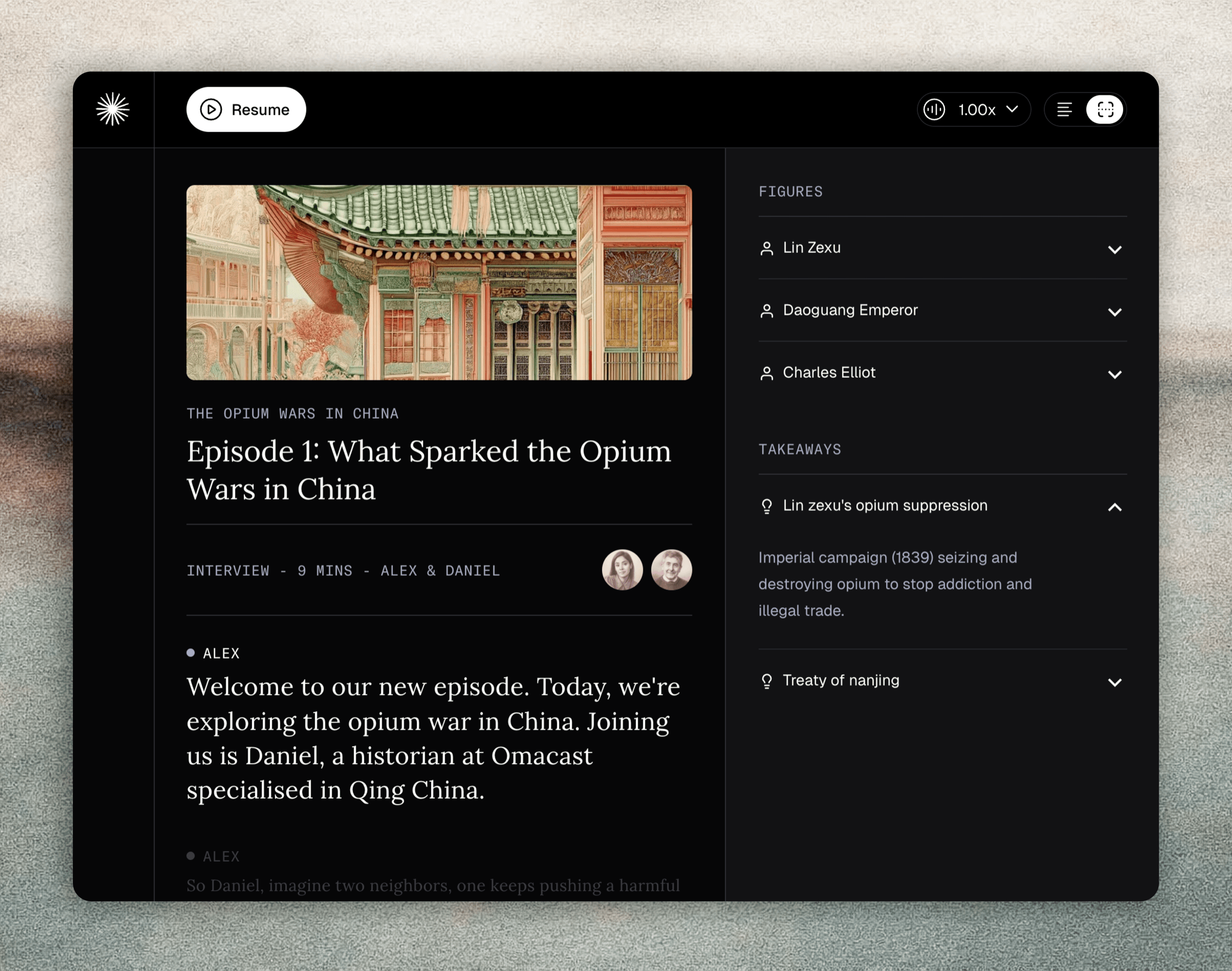

3. Episode Page

The Experience

The episode is built to be learned from. Audio starts automatically after a countdown, and the transcript scrolls in sync.

A side panel surfaces the people and concepts worth remembering from the episode, each with a short explanation, highlighted and expanded as they’re mentioned.

At the end, two follow-up episodes are suggested by both the speakers and the UI, pulled from concepts that actually came up in the conversation.
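The transcript sync described above can be sketched as a lookup from playback time to the line being spoken. This assumes each transcript line carries a start time in seconds and that lines are sorted by it; the `TranscriptLine` shape and `activeLineIndex` name are hypothetical.

```typescript
// Hypothetical transcript line: when it starts, who speaks, what is said.
interface TranscriptLine {
  startSec: number;
  speaker: string;
  text: string;
}

// Binary search for the last line whose start time is at or before the
// current playback time. Assumes `lines` is sorted by startSec.
// Returns -1 when playback has not reached the first line yet.
function activeLineIndex(lines: TranscriptLine[], currentTimeSec: number): number {
  let lo = 0;
  let hi = lines.length - 1;
  let found = -1;
  while (lo <= hi) {
    const mid = Math.floor((lo + hi) / 2);
    if (lines[mid].startSec <= currentTimeSec) {
      found = mid; // candidate; keep looking for a later match
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}
```

A player could call this on each time update of the audio element and scroll the returned line into view. The same index could drive which side-panel entry is highlighted.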

In the Background

- The episode is tagged with an ID that doubles as its LangSmith trace, so every step downstream is linked to the same record.

- The script is written by OpenAI’s GPT-5 in a single call that covers both speakers, with inline emotion cues the voice model reads as inflection.

- The figures and takeaways are extracted by GPT-5-mini as structured JSON, so the side panel can be rendered straight from that JSON.

- The voices are synthesized by ElevenLabs eleven_v3, generated segment by segment in batches. The first five segments are produced upfront so playback starts instantly, and the rest are streamed as the user listens.
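The "first five upfront, rest as the user listens" pattern from the last step can be sketched with an async generator. The `synthesize` parameter stands in for the text-to-speech call and is an assumption, as are the function and constant names. Generating the remaining segments one at a time is a simplification of whatever batching the app actually does.

```typescript
// Hypothetical synthesis function: takes one script segment and resolves
// to some audio handle (a URL, a buffer, ...). Injected so the sketch
// does not depend on any particular TTS client.
type Synthesize<T> = (segmentText: string) => Promise<T>;

// Number of segments produced before playback starts (from the text above).
const UPFRONT_SEGMENTS = 5;

// Synthesizes the first `upfront` segments in parallel, yields them in
// order, then synthesizes each remaining segment only when the consumer
// asks for the next clip.
async function* streamEpisodeAudio<T>(
  segments: string[],
  synthesize: Synthesize<T>,
  upfront: number = UPFRONT_SEGMENTS,
): AsyncGenerator<T> {
  const head = await Promise.all(
    segments.slice(0, upfront).map((text) => synthesize(text)),
  );
  for (const clip of head) {
    yield clip;
  }
  for (const text of segments.slice(upfront)) {
    yield await synthesize(text);
  }
}
```

A player would consume this with `for await (const clip of streamEpisodeAudio(segments, synthesize))`, queueing each clip as it arrives. The trade-off is a slightly longer initial wait in exchange for gapless playback early in the episode.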

The Tech Stack

- Built with: Claude Code

- Framework: Next.js 15, React 19, TypeScript, Tailwind 4

- Hosting: Vercel

- Database: Supabase

- LLM for Conversation: OpenAI gpt-5.4

- LLM for Questionnaire & Metadata: gpt-5-mini

- TTS: ElevenLabs eleven_v3

- Payment: Stripe

- Email: Resend

- Observability: LangSmith

- Error tracking: Sentry

- Analytics: Mixpanel + Meta Pixel

Acknowledgements

Built with the steady support of Remko Mak.

The Outcome

Launched the product from 0 to 1, leading end-to-end across product strategy, design, and technical implementation.

Tracked conversion across the full funnel in Mixpanel (Meta ads, landing page, episode creation, payment) to validate demand and prioritise iterations.